My whole career has been spent working on software, first as a small-time consultant in high school and now as a software engineer and manager at Rapleaf. Though I’ve always harbored an intellectual soft spot for more physical engineering pursuits, I’ve never had more than passing occasion to indulge them.

That is, until recently, when Adam and I founded 8 Bit Lit, our little lamp-making business. Together we took what we thought was a really cool idea from concept to prototype to limited production run, and I have to say that while in some ways engineering is engineering, in other ways, the work I’ve done up until now did not prepare me for the stark differences between producing a software project and a hardware one. This post outlines the three differences between hardware and software projects that I found most interesting: time, scaling, and repeatability and undoability.

Time

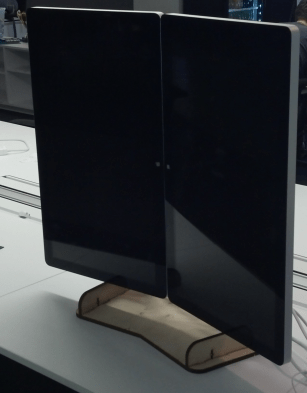

The first of what would turn out to be many parts orders.

There is a fundamental difference in the amount of time consumed by a hardware project and a software one. In hardware, everything ended up taking far more time than I expected: design and development, prototyping, assembly, packaging, delivery.

I could go on and on about each of these, but for the sake of brevity, let’s just look at the development phase as a concrete example. When you decide you want to make a little electronic widget that does something, even something trivial, you design the circuit and then figure out what parts it will take. And then, since they’re actual, physical things, and often quite specific ones, you have to wait for those parts to show up. That means waiting for another person somewhere else in the world to put the thing you need into a box, hand it to another person who will drive it somewhere else in a truck, and so on until it arrives at your door. Even if you pay exorbitant shipping costs, you’re looking at many hours to a few days of latency between when you decide what you want to build and when you can even try it for the first time!

Contrast this with the software world. In most cases, there is no difference between your “design” for the thing and the thing itself: the source code for a website is already a website. Everything you need is already on hand in infinite supply – you can have as many for loops as you’d like, and your personal computer is usually sufficient to test what you want to accomplish. Occasionally you might find yourself needing a specialized piece of code written by someone else, but even in these cases, it usually just means downloading a library from the internet, which takes seconds to minutes.

This is a huge difference in terms of your ability to iterate quickly and try out ideas. In software, you can go from no idea at all to a functional prototype in as long as it takes you to figure out what you want and code it up. In hardware, there’s a built-in latency barrier that makes more forethought a requirement. This means your prototypes have a higher inherent cost, slowing you down.

Some would point out that this problem can be solved by “libraries” of development parts: resistors, capacitors, and ICs of all different values and functions stored in boxes on shelves. In practice, though, this part library has to be vast and a little expensive to give you more than basic coverage, and even with such a library, you’re unlikely to be able to carry any of the really specialized stuff that’s coming out all the time. Again, in the software world, you get this kind of library almost for free through the internet, and even when you can’t always be connected, the sheer number and variety of libraries you would be able to keep trivially accessible is enormous.

Scaling

In many ways, the scaling process is one of the things that is most challenging to me. In software, much of scaling is handled by just throwing more processing power at the problem. Your website will have 2x as much traffic next year as this one? Double your number of servers. (Certainly there are ways in which software projects must be constructed to allow for this kind of scaling, but there are lots of frameworks designed to help you do this.)

Contrast that with the construction of a physical object. Need to make 2x as many? Does that just mean hiring 2x as many people to assemble them? Well, maybe. But it also means training those new people, and they’ll have an efficiency ramp-up period where they’ll produce fewer units or unshippable units or probably both. Or should you change your assembly process to be twice as efficient at creating each unit in the first place? Do you have the tooling for that? Can your facility even handle 2x as many people or machines working on the products?

Software projects face some of the same problems, but the slope of their curve is so much flatter. Many, many computers can fit into a very small space and serve a great many requests, and as long as you’re operating from a colocation facility, there’s likely to be a large amount of space available to grow into. And that’s not even considering so-called cloud offerings, where you can trivially add more capacity to your systems with the click of a button.

Repeatability and Undoability

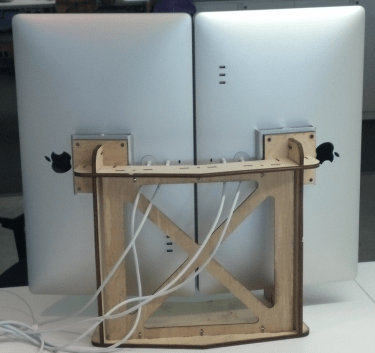

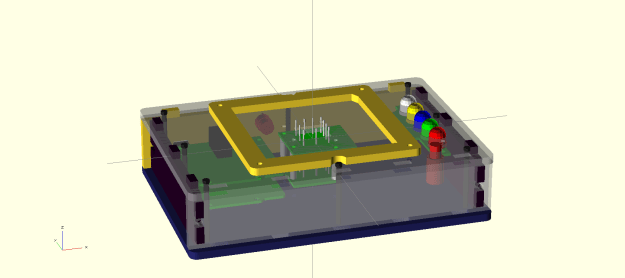

Try un-etching a circuit board.

I think these two are especially poignant. First, repeatability. I think of repeatability as the ability to have a process you’ve designed produce the same output from identical inputs over and over again without problems. In software, this is almost a given. It’s so intrinsic that we use it to make automated tests for ourselves to make sure things keep working – if the results all of a sudden come out differently, then we must have broken something. And generally speaking, the outputs of computer processes don’t degrade over time, even though their hardware does – they’ve been designed to tolerate errors or to fail very noisily when it’s impossible to keep going gracefully.
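To make that concrete, here is a minimal sketch in Python of the kind of check I mean. The function `price_with_tax` and its numbers are hypothetical, made up purely for illustration; the only point is that identical inputs should give identical outputs on every single run.

```python
def price_with_tax(price_cents: int, tax_percent: int) -> int:
    """Hypothetical example function: add a percentage tax, in whole cents."""
    return price_cents + (price_cents * tax_percent) // 100


def is_repeatable(func, args, runs: int = 1000) -> bool:
    """Call func with the same args many times and check the output never changes."""
    first = func(*args)
    return all(func(*args) == first for _ in range(runs))


if __name__ == "__main__":
    # Same inputs, same output, every time. If either check failed,
    # we would know we had broken something.
    assert price_with_tax(1000, 8) == 1080
    assert is_repeatable(price_with_tax, (1000, 8))
```

An automated test is really just the first assertion written down once and run forever; it only works because the second property holds.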

With physical processes, though, especially those done by hand, repeatability is not to be taken for granted. Even though a process may be designed in a perfectly repeatable pattern, reality insists on imposing random failures. This is because in the real world, there are far more variables than we can reasonably take into account. For instance, let’s say you’re hand-gluing the sides of a box together. Maybe this time, you didn’t use enough glue. Or maybe the clamp you’re using is wearing out, so the sides don’t get good contact. Or maybe one of the sides was made from raw materials with intrinsic flaws, invisible little cracks that doom the part to failure. These problems arise even when a person is not responsible for the actual labor – maybe your milling bit finally snaps from wear or material variations give you an unacceptable result. The bottom line is that these things are difficult to account for.

Similar to repeatability is undoability. I think of undoability as the ability to reverse an erroneous process such that you can fix things and move on. When you are working on a software project, mistakes are often almost trivial to reverse: you click the “undo” button, or you revert your changes in your source control manager, and you’re back to where you were. Because your actual work output is data – either code or the results of your processes – making copies is fundamentally cheap or free, which means that with a little care, you can trivially protect yourself from losing everything to mistakes. This makes your mistakes very cheap – so cheap, in fact, that it’s often reasonable to just try something that might not work out, since you can always just go back.
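Here is a rough sketch of why undo is so cheap when your work is just data. The `UndoableDocument` class is a hypothetical toy, not how any real editor or source control system works; it simply keeps a copy of its state before every change, so a mistake can always be rolled back.

```python
import copy


class UndoableDocument:
    """Hypothetical toy document that snapshots its state before each change."""

    def __init__(self, lines=None):
        self.lines = list(lines or [])
        self._history = []  # earlier copies of self.lines, oldest first

    def append(self, line: str) -> None:
        # Copying data is cheap, so save the whole state before changing it.
        self._history.append(copy.deepcopy(self.lines))
        self.lines.append(line)

    def undo(self) -> bool:
        """Restore the most recent snapshot; return False if there is nothing to undo."""
        if not self._history:
            return False
        self.lines = self._history.pop()
        return True


if __name__ == "__main__":
    doc = UndoableDocument(["a line I want to keep"])
    doc.append("an experiment that did not work out")
    doc.undo()
    assert doc.lines == ["a line I want to keep"]
```

Keeping a full copy per change would be absurd for a glued plastic box, but for a list of strings it costs next to nothing.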

The real world is not so forgiving. Many physical processes are not trivially reversible, if they are reversible at all. If you glue a box together inside out, then there’s a good chance it’s going to stay that way and be a total loss. Sure, a $4 plastic box may not be a big cost for a random experiment, but what about a $50 ethernet module? Now you have to think carefully about how a failed test could be made reversible. This has significant implications for design and production if you’re used to the software way of thinking, where mistakes are cheap. In the long run, this slows down the design process and costs you money during production.

Sometimes you can design undoability into your processes from the beginning, which can pay off big time. For instance, instead of gluing a box together, you fasten it with screws so you can always just take it apart if you make a mistake. However, this approach introduces its own set of costs: more complex design, more actual parts, more steps for assembly, and aesthetic compromises. You just have to balance all these factors when you’re deciding how to make your product.

Conclusion

You might think from this discussion that this experience has shown me the absolute superiority of software projects over hardware ones. Nothing could be further from the truth. Despite the difficulties, there’s definitely an element of satisfaction I’ve found from this project that isn’t something easily gotten from software, even from the coolest projects I’ve done. There’s something about producing an actual, tangible thing at the end of the day that will keep me striving to get better at the process and hone my craft, no matter how difficult it is.